disperse_d

A Kubernetes-native control plane that turns raw GPUs across providers into consistent AI clusters running Slurm, Ray and other industry-standard ML tools.

Built for the Hard Parts of AI Infrastructure.

disperse_d automates the hardest parts of running AI clusters based on Slurm or Ray across distributed GPU infrastructure — provisioning schedulers, moving data, enforcing governance, and operating clusters consistently on Kubernetes.

Pre-Flight Infrastructure Validation

Deep infrastructure checks before cluster deployment. Validates networking, storage, GPUs, and runtime dependencies — and automatically fixes common issues.

Automated Cluster Provisioning

Turn any Kubernetes GPU fleet into a production AI cluster. disperse_d deploys schedulers like Slurm or Ray and configures networking, policies, and runtimes automatically.

Unified Multi-Provider Control

Manage clusters across clouds and regions from one control plane. Standardize provisioning and operations via UI, API, or Terraform.

High-Performance Data Mover

High-throughput dataset transfer from S3-compatible storage to AI clusters. On-demand synchronization ensures training jobs always have the data they need.

Governance & Capacity Allocation

Allocate GPU capacity across teams with quotas and fair-share policies. Integrates with LDAP and Active Directory for enterprise identity.

Your AI infrastructure across providers managed through unified Kubernetes-native APIs

disperse_d is built for infrastructure teams.

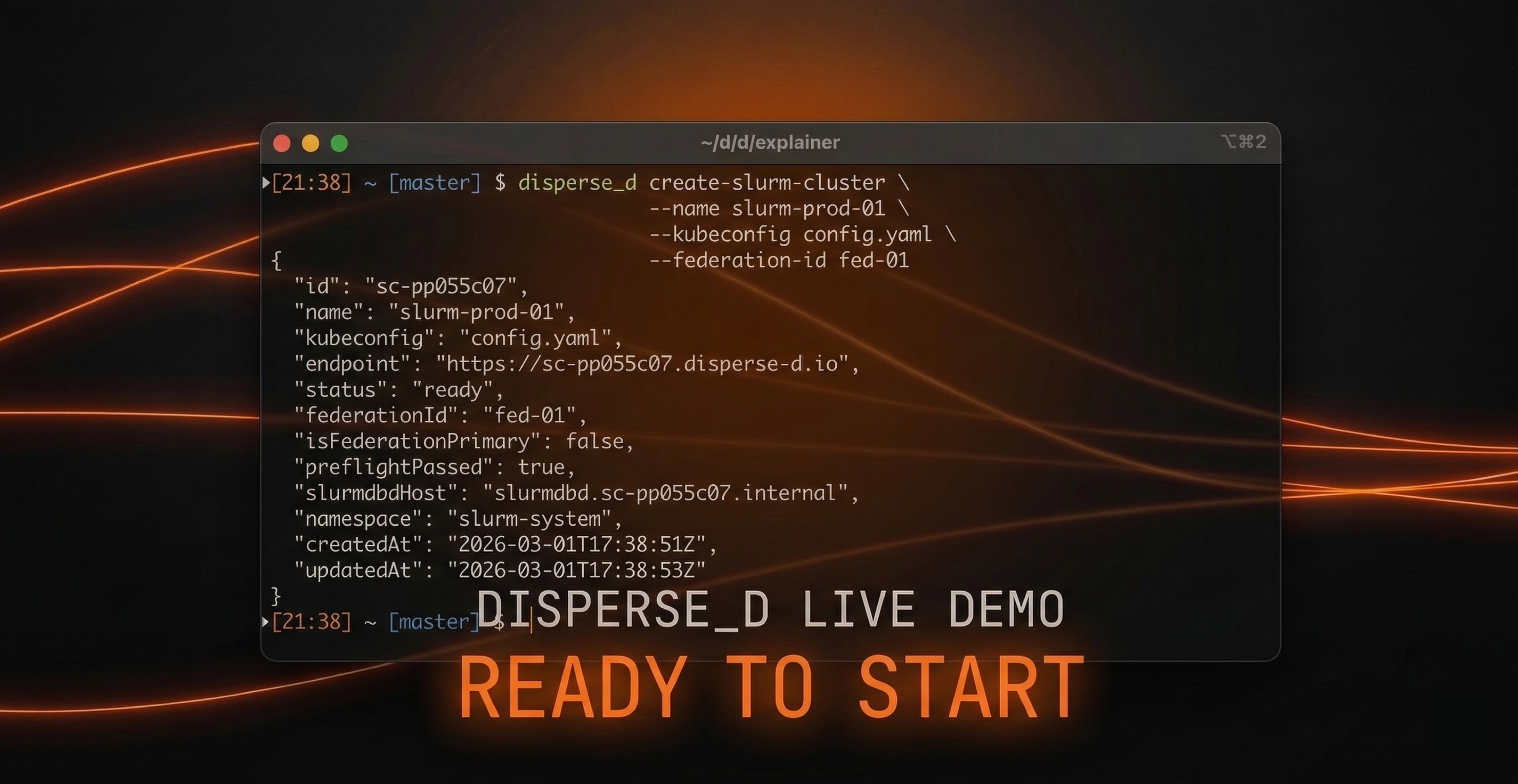

Provision clusters, synchronize data, monitor health, and govern capacity through a simple API or CLI.

Integrate disperse_d into existing automation workflows, internal platforms, or Terraform infrastructure.

Turn any Kubernetes-backed GPU fleet into a production-ready AI cluster. disperse_d deploys standard schedulers like Slurm or Ray and configures networking, policies, and runtime automatically.

Move datasets from any S3-compatible storage directly to your AI clusters. Fast, repeatable data synchronization ensures training jobs always have the data they need.

Define organizational hierarchy, sync it from LDAP or Active Directory, and assign fair-share GPU allocations across teams and projects.

Built-in health checks continuously validate GPUs, nodes, and runtime stability. disperse_d automatically identifies failing nodes, cordons them, and drains workloads to keep clusters healthy.